The recent NVDIMM webcasts on the SNIA BrightTALK Channel sparked many questions from the almost 1,000 viewers who watched them live or downloaded the on-demand casts. Now, NVDIMM SIG Chairs Arthur Sainio and Jeff Chang answer 35 of them in this blog. Did you miss the live broadcasts? No worries, you can view NVDIMM and other webcasts on the SNIA webcast channel: https://www.brighttalk.com/channel/663/snia-webcasts.


FUTURES QUESTIONS

What timeframe do you see server hardware, OS, and applications readily adopting/supporting/recognizing NVDIMMs?

DDR4 server and storage platforms are ready now. There are many off-the-shelf server and storage motherboards that support NVDIMM-N.

Linux version 4.2 and beyond has native support for NVDIMMs. All the necessary drivers are supported in the OS.

NVDIMM adoption is in progress now.

Technical Preview 5 of Windows Server 2016 has NVDIMM-N support.

How, if at all, does the positioning of NVDIMM-F change after the eventual introduction of new NVM technologies?

If 3DXP is successful, it will likely have a big impact on NVDIMM-F. 3DXP could be seen as an advanced version of an NVDIMM-F product. It sits directly on the DDR4 bus and is byte addressable.

NVDIMM-F products face the challenge of being made BYTE ADDRESSABLE, and how hard that is depends on what kind of persistent media is used.

If NAND flash is used, it would take a lot of techniques and resources to make such a product BYTE ADDRESSABLE.

On the other hand, if the new NVM technologies bring out persistent media that are BYTE ADDRESSABLE, then NVDIMM-F products could easily use them for their backend.

How does NVDIMM-N compare to Intel’s 3DXPoint technology?

At this point there is limited technical information available on 3DXP devices.

When the specifications become available the NVDIMM SIG can create a comparison table.

NVDIMM-N products are available now. 3DXP-based products are planned for 2017 and 2018. Theoretically, 3DXP devices could be used on NVDIMM-N type modules.

PERFORMANCE AND ENDURANCE QUESTIONS

What are the NVDIMM performance and endurance requirements?

NVDIMM-N is no different from an RDIMM under normal operating conditions. The endurance of the Flash or NVM technology used on the NVDIMM-N is not a critical factor since it is only used for backup.

NVDIMM-F would depend on various factors: (1) Is the backend going to be NAND Flash or some other entity? (2) What kind of access pattern is the application going to use? The performance must be at least the same as that of NVDIMM-N.

Are there endurance requirements for NVDIMM-F? Won’t the flash wear out quickly when used as memory?

Yes, the aspect of Flash being used as a RANDOM access device with MEMORY access characteristics would definitely have an impact on the endurance.

NVDIMM-F – Don’t the performance limitations of NAND vs. DRAM affect the application?

NAND Flash will never match the performance of DRAM in an entity-to-entity comparison. But in the perspective of the wider solution, the traditional path from DRAM data to persistent data incurs more delay, contributed by the many software layers involved in making the data persistent. With NVDIMM-F, the data is persistent immediately, at the cost of a small additional latency.

Is there extra heat being generated? Does it need any other cooling (NVDIMM-F, NVDIMM-N)?

No

In general, our testing of NVDIMM-F vs PCIe based SSDs has not shown the expected value of NVDIMMs. The PCIe based NVMe storage still outperforms the NVDIMMs.

TBD

What is the amount of overhead that NVDIMMs are adding on CPUs?

None during normal operation.

What can you say about the time required typically to charge the supercaps? Is the application aware of that status before charge is complete?

Approximately two minutes depending on the density of the NVDIMM and the vendor.

The NVDIMM will not report ready while the supercaps are still charging, so the system BIOS waits for it. If the NVDIMM is not functioning, the BIOS wait times out.
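The wait-until-ready-or-time-out behavior described above can be pictured with a small Python sketch. This is only an illustration, not BIOS code: the function name, the `is_ready` callback, and the 180-second default timeout are all assumptions made for the example, and real firmware reads a vendor-specific status over its own interface.

```python
import time


def wait_for_nvdimm_ready(is_ready, timeout_s=180.0, poll_interval_s=1.0,
                          clock=time.monotonic, sleep=time.sleep):
    """Poll a (hypothetical) readiness check until it passes or a timeout expires.

    Returns True if the module reported ready in time, False on timeout.
    The clock and sleep arguments exist so the loop can be tested without waiting.
    """
    deadline = clock() + timeout_s
    while True:
        if is_ready():
            return True          # backup energy source charged, module armed
        if clock() >= deadline:
            return False         # module never became ready: give up
        sleep(poll_interval_s)
```

A caller would pass in whatever status check its platform offers, for example `wait_for_nvdimm_ready(lambda: read_status() == "armed")`, where `read_status` is again a placeholder.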

USE QUESTIONS

What will happen if a system crashes and then comes back before the NVDIMM finishes backup? How does the OS know where to continue, given that the state in the registers and L1/L2/L3 cache is already lost?

When the system comes back up, it checks whether valid data is backed up in the NVDIMM. If so, the backed-up data is restored before the BIOS sets up the system.

The OS can’t depend on the contents of the L1/L2/L3 cache. Applications must do I/O fencing, use commit points, etc. to guarantee data consistency.
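To make the commit-point idea concrete, here is a minimal Python sketch under stated assumptions. It uses an ordinary memory-mapped file as a stand-in for a persistent region, and `mmap.flush()` as a stand-in for the cache flush and fence instructions an application would use on a real NVDIMM-N. The record layout, the names `commit_record` and `recover_record`, and the sizes are invented for the illustration.

```python
import mmap
import os
import struct
import zlib

HEADER = struct.Struct("<IIB")   # payload length, CRC32 of payload, commit flag
PAYLOAD_OFFSET = 16              # payload starts after the header area
REGION_SIZE = 4096               # illustrative region size


def open_region(path):
    """Map a fixed-size file; existing contents are preserved across reopen."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        os.ftruncate(fd, REGION_SIZE)
        return mmap.mmap(fd, REGION_SIZE)
    finally:
        os.close(fd)             # the mapping keeps its own reference


def commit_record(region, payload):
    """Write the payload, make it durable, and only then publish the commit flag."""
    if len(payload) > REGION_SIZE - PAYLOAD_OFFSET:
        raise ValueError("payload too large for region")
    # 1. Invalidate any previous record so a crash mid-update is detectable.
    region[0:HEADER.size] = HEADER.pack(0, 0, 0)
    region.flush()
    # 2. Write the data and flush it before the commit point.
    region[PAYLOAD_OFFSET:PAYLOAD_OFFSET + len(payload)] = payload
    region.flush()
    # 3. Commit point: the header with the flag set goes in last.
    region[0:HEADER.size] = HEADER.pack(len(payload), zlib.crc32(payload), 1)
    region.flush()


def recover_record(region):
    """Return the last committed payload, or None if nothing valid was committed."""
    length, crc, committed = HEADER.unpack(region[0:HEADER.size])
    if committed != 1 or length > REGION_SIZE - PAYLOAD_OFFSET:
        return None
    payload = bytes(region[PAYLOAD_OFFSET:PAYLOAD_OFFSET + length])
    return payload if zlib.crc32(payload) == crc else None
```

After a restart, the application calls `recover_record` and trusts only data whose commit flag and checksum both check out. Anything that was still sitting in CPU caches when power was lost never reached the commit point and is simply ignored.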

The power supply should be able to hold power for at least 1ms after the warning of AC power loss.

Is there garbage collection on NVDIMMs?

This depends on individual vendors. NVDIMM-N may have overprovisioning and wear leveling management for the NAND Flash.

Garbage collection really only makes sense for NVDIMM-F.

How is byte addressing enabled for NAND storage?

By default, NAND storage can be addressed only through BLOCK mode addressing. If BYTE addressability is desired, then the DDR memory at the front must provide sophisticated CACHING TECHNIQUES to trick the Host Memory Controller into thinking that it is actually accessing a larger capacity DDR memory.
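The caching idea can be sketched in a few lines of Python. This is a toy model, not how any vendor's controller works: the class names, the 4096-byte block size, and the LRU write-back policy are all assumptions chosen for the illustration. A small "DRAM" cache of whole blocks sits in front of a block-only device and exposes byte-level load and store on top of it.

```python
from collections import OrderedDict

BLOCK_SIZE = 4096  # illustrative block size


class BlockDevice:
    """Stand-in for NAND: only whole-block reads and writes are allowed."""

    def __init__(self, num_blocks):
        self.blocks = [bytes(BLOCK_SIZE) for _ in range(num_blocks)]
        self.block_reads = 0
        self.block_writes = 0

    def read_block(self, n):
        self.block_reads += 1
        return self.blocks[n]

    def write_block(self, n, data):
        assert len(data) == BLOCK_SIZE
        self.block_writes += 1
        self.blocks[n] = bytes(data)


class ByteAddressableFront:
    """Small write-back LRU cache that offers byte load/store over a block device."""

    def __init__(self, device, cache_blocks):
        self.device = device
        self.cache_blocks = cache_blocks
        self.cache = OrderedDict()  # block number -> [bytearray, dirty flag]

    def _get(self, n):
        if n in self.cache:
            self.cache.move_to_end(n)            # mark as most recently used
        else:
            if len(self.cache) >= self.cache_blocks:
                old, (data, dirty) = self.cache.popitem(last=False)
                if dirty:                        # write back the evicted block
                    self.device.write_block(old, data)
            self.cache[n] = [bytearray(self.device.read_block(n)), False]
        return self.cache[n]

    def load(self, addr):
        return self._get(addr // BLOCK_SIZE)[0][addr % BLOCK_SIZE]

    def store(self, addr, value):
        entry = self._get(addr // BLOCK_SIZE)
        entry[0][addr % BLOCK_SIZE] = value
        entry[1] = True

    def flush(self):
        """Write every dirty cached block back to the device."""
        for n, entry in self.cache.items():
            if entry[1]:
                self.device.write_block(n, entry[0])
                entry[1] = False
```

A single-byte store that misses the cache costs a whole-block read, and eventually a whole-block write, which is one way to see why NAND behind a byte-addressable front end takes "a lot of techniques and resources".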

Is the restore command issued over the I2C bus? Is that also known as the SMBus?

Yes, Yes

Could NVDIMM-F products be used as both storage and memory within the same server?

NVDIMM-F is by definition only block storage. NVDIMM-P is both (block) storage and memory.

COMPATIBILITY QUESTIONS

Is NVDIMM-N support built into the OS or do the NVDIMM vendors need to provide drivers? What OS’s (Windows version, Linux kernel version) have support?

In Linux, generic NVDIMM-N support has been available since version 4.2 of the kernel.

All the necessary drivers are provided in the OS itself.

Regarding the Linux distributions, only Fedora and Ubuntu have upgraded themselves to the 4.x kernel.

The crucial aspect is the BIOS/MRC support needed for the vendor-specific NVDIMM-N to be exposed to the host OS.

MS Windows has OS support, which needs to be downloaded.

What OS support is available for NVDIMM-F? I’m assuming some sort of drivers is required.

Diablo has said they worked with the BIOS vendors to enable their Memory1 product. We need to check with them.

Other NVDIMM-F vendors would likely require drivers.

As of now no native OS support is available.

Will NVDIMMs work with typical Intel servers that are 2-3 years old? What are the hardware requirements?

This depends on the CPU. NVDIMM-N is or will be supported on Haswell, Grantley, Broadwell, and Purley.

The hardware requires that the CPLD, SAVE, and ADR signals are present.

Is RDMA compatible with NVDIMM-F or NVDIMM-N?

The RDMA (Remote Direct Memory Access) is not available by default for NVDIMM-N and NVDIMM-F.

A software layer/extension needs to be written to accommodate that. Work is in progress by the PMEM committee (www.pmem.io) to make the RDMA feature available transparently to applications in the future.

SNIA Reference: http://www.snia.org/sites/default/files/SDC15_presentations/persistant_mem/ChetDouglas_RDMA_with_PM.pdf

What’s the highest capacity that an NVDIMM-N can support?

Currently 8GB and 16GB, but this depends on individual vendors’ roadmaps.

COST QUESTIONS

What is the NVDIMM cost going to look like compared to other flash type storage options?

This relates directly to what types and quantities of Flash, DRAM, controllers and other components are used for each type.

MISCELLANEOUS QUESTIONS

How many vendors offer NVDIMM products?

AgigA Tech, Diablo, Hynix, Micron, Netlist, PNY, SMART, and Viking Technology are among the vendors offering NVDIMM products today.

Is encryption on the NVDIMM handled by the controller on the NVDIMM or the OS?

Encryption on the NVDIMM is under discussion at JEDEC. There has been no standard encryption method adopted yet.

If the OS encrypts data in memory, the contents of the NVDIMM backup would already be encrypted, eliminating the need for the NVDIMM to perform encryption. However, because of the performance penalty of OS encryption, NVDIMM vendors are considering encryption on the NVDIMM itself.

Are memory operations what is known as DAX?

DAX means Direct Access. It is an optimization used in modern file systems, particularly EXT4, that eliminates the kernel cache for holding write data. With no intermediate cache buffers, write operations go directly to the media. This makes the writes persistent as soon as they are committed.

Can you give some practical examples of where you would use NVDIMM-N, -F, and -P?

NVDIMM-N: load/store byte access for journaling, tiering, caching, write buffering and metadata storage

NVDIMM-F: block access for in-memory database (moving NAND to the memory channel eliminates traditional HDD/SSD SAS/PCIe link transfer, driver, and software overhead)

NVDIMM-P: can be used for either NVDIMM-N or -F applications

Are reads and writes all the same latency for NVDIMM-F?

The answer depends on what kind of persistent layer is used. If it is the NAND flash, then the random writes would have higher latencies when compared to the reads. If the 3D XPoint kind of persistent layer is used, it might not be that big of a difference.

I have interest in the NVDIMMs being used as a replacement for SSD and concerns about clearing cache (including credentials) stored as data moves from NVM to PM on an end user device

The NVDIMM-N uses serialization and fencing with Intel instructions to guarantee data is in the NVDIMM before a power failure and ADR.

I am interested in how many banks of NVDIMMs can be added to create a very large SSD replacement in a server storage environment.

NVDIMMs are added to a system in memory module slots. The current maximum density is 16GB or 32GB. Server motherboards may have 16 or 24 slots. If 8 of these slots have 16GB NVDIMMs, that should be like a 128GB SSD (8 x 16GB).
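The slot arithmetic is easy to check with a couple of lines of Python. The function name is ours, and the numbers are simply the slot counts and module density quoted in the answer; this is a back-of-the-envelope sketch that ignores the slots that must stay populated with regular DRAM.

```python
def total_capacity_gb(populated_slots, module_gb):
    """Aggregate NVDIMM capacity: number of populated slots times module density."""
    return populated_slots * module_gb


print(total_capacity_gb(8, 16))    # 128
print(total_capacity_gb(24, 16))   # 384, if every slot of a 24-slot board held a 16GB module
```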

What are the environmental requirements for NVDIMMs (power, cooling, etc.)?

There are some components on NVDIMMs that have a lower operating temperature than RDIMMs like flash and FPGA devices. Refer to each vendor’s data sheet for more information. Backup Energy Sources based on ultracapacitors require health monitoring and a controlled thermal environment to ensure an extended product life.

How about data-at-rest protection management? Is the data in NVDIMM protected/encrypted? Complying with TCG and FIPS seems very challenging. What are the plans to align with these?

As of today, encryption has not been standardized by JEDEC. It is currently up to each NVDIMM vendor whether or not to provide encryption.

Could you explain the relationship between the NVDIMM and the IO stack?

In the PMEM mode, the Kernel presents the NVDIMM as a reserved memory, directly accessible by the Host Memory Controller.

In the Block Mode, the Kernel driver presents the NVDIMM as a block device to the IO Block Layer.

With NVDIMMs the data can be in memory or storage. How is the data fragmentation managed?

The NVDIMM-N is managed as regular memory. The same memory allocation fragmentation issues and handling apply. The NVDIMM-F behaves like an SSD. Fragmentation issues on an NVDIMM-F are handled like an SSD with garbage collection algorithms.

Is there a plan to support PI type data protection for NVDIMM data? If not, achieving E2E data protection cannot be attained.

As of today, encryption has not been standardized by JEDEC. It is currently up to each NVDIMM vendor whether or not to provide encryption.

Since NVDIMM is still slower than DRAM, do we still need DRAM in the system? Can we not get rid of DRAM yet?

With NVDIMM-N, DRAM is still being used. NVDIMM-N operates at the speed of a standard RDIMM.

With NVDIMM-F modules, DRAM memory modules are still needed in the system.

With NVDIMM-P modules, DRAM memory modules are still needed in the system.

Can you use NVMe over Ethernet?

NVMe over Fabrics is under discussion within SNIA http://www.snia.org/sites/default/files/SDC15_presentations/networking/WaelNoureddine_Implementing_%20NVMe_revision.pdf



c Storage, and SMB3 plugfests; ten Birds-of-a-Feather Sessions, and amazing networking among 450+ attendees. Sessions on NVMe over Fabrics won the title of most attended, but Persistent Memory, Object Storage, and Performance were right behind. Many thanks to SDC 2016 Sponsors, who engaged attendees in exciting technology discussions.

c Storage, and SMB3 plugfests; ten Birds-of-a-Feather Sessions, and amazing networking among 450+ attendees. Sessions on NVMe over Fabrics won the title of most attended, but Persistent Memory, Object Storage, and Performance were right behind. Many thanks to SDC 2016 Sponsors, who engaged attendees in exciting technology discussions. You’ll want to stream keynotes from Citigroup, Toshiba, DSSD, Los Alamos National Labs, Broadcom, Microsemi, and Intel – they’re available now on demand on SNIA’s YouTube channel,

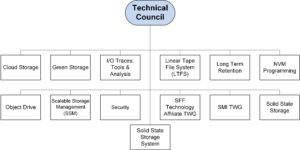

You’ll want to stream keynotes from Citigroup, Toshiba, DSSD, Los Alamos National Labs, Broadcom, Microsemi, and Intel – they’re available now on demand on SNIA’s YouTube channel,  Mark Carlson is the current Chair of the SNIA Technical Council (TC). Mark has been a SNIA member and volunteer for over 18 years, and also wears many other SNIA hats. Recently, SNIA on Storage sat down with Mark to discuss his first nine months as the TC Chair and his views on the industry.

Mark Carlson is the current Chair of the SNIA Technical Council (TC). Mark has been a SNIA member and volunteer for over 18 years, and also wears many other SNIA hats. Recently, SNIA on Storage sat down with Mark to discuss his first nine months as the TC Chair and his views on the industry.

can get a “sound bite” of what to expect by downloading SDC podcasts via

can get a “sound bite” of what to expect by downloading SDC podcasts via